A guide to game project planning

The complete planning stack for small game development teams

Heads up: this is a long article. We cover a lot of ground on this one.

A small team, talented and motivated, has a game concept they genuinely believe in. They've talked about it, sketched it out, argued over it. They're excited - and why wouldn't they be? It's a great idea. So they open their laptops, spin up a repo, agree on a version of Unreal and start making things. And for a while, this feels like the right call. They're building, they're moving, they're making a game. This is what it's all about.

Then, somewhere around the four- or five-month mark in a, say, eighteen-month dev cycle, the wheels start wobbling. Nobody quite agrees on what the next thing is, or why. The to-do list is a chaos of notes, ideas and Miro diagrams dumped from every direction. Some things got built twice. Some things that should have been built first weren't built at all. The engineer is blocked waiting on art that nobody knew was a dependency. The designer rewrote a system the programmer had already implemented, because the spec wasn't clear. The game director is adding new features in real time, because they saw something at a demo that they liked.

Nobody is doing anything wrong, exactly. There’s just no plan. No rhyme or reason. Planning feels slow and it’s not exactly the most fun of activities, but in my experience, the studios that invest in planning are the ones that move with intention and end up being faster when it really matters.

I want to pragmatically walk you through the full arc of project planning for a video game, from the structural logic that sits above everything else to the first sprint or Kanban board that gets work actually moving. We’ll use a fictional game called Ironfall (it shames me to admit how long I spent picking that name) as our running example throughout: a five-person team, PC-first, action-RPG, pre-funding, bootstrapped. Small enough to be realistic, complex enough to make the examples meaningful.

A few things we will not cover here, because they deserve their own dedicated treatment (and I’ve written about some of these already): staffing planning, risk planning, quality planning, communication planning, financial planning and team chartering. These are not optional topics. They're essential components of a complete project plan. But they're also big enough to warrant real depth and we'd be doing them a disservice by compressing them into an already long article. Look for those separately.

Let’s start at the top.

The planning onion: how planning is structured

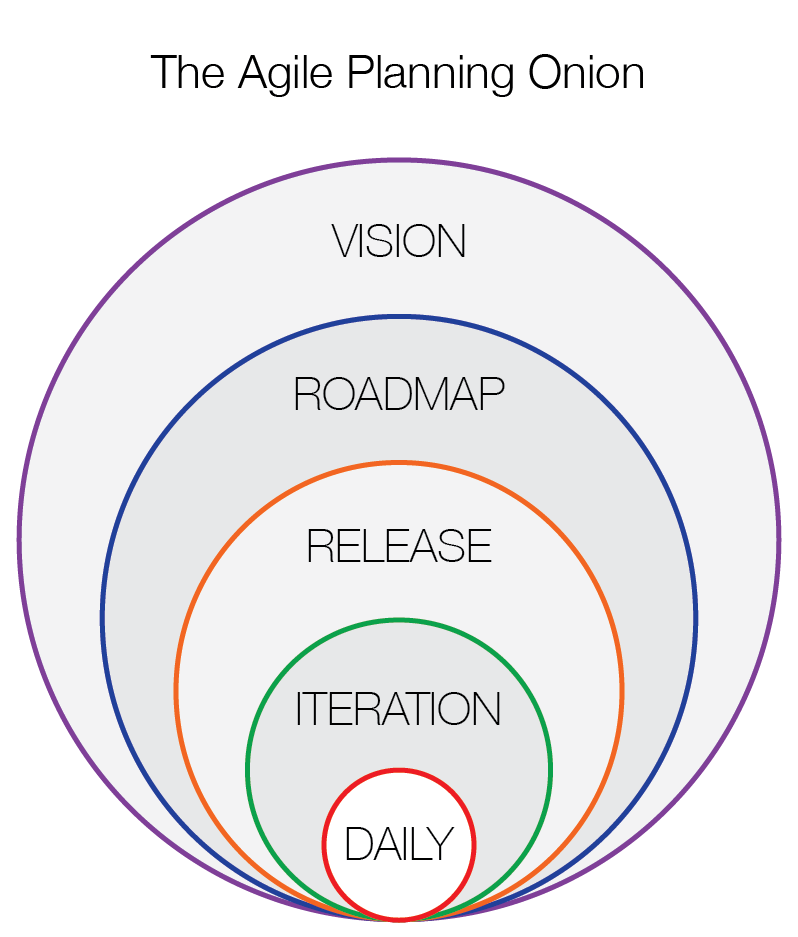

There’s a model that Mike Cohn describes in Agile Estimating and Planning that I find genuinely useful as an orientation tool: the agile planning onion. The image is simple: planning happens at multiple levels, each nested inside the others, from the widest horizon down to the narrowest. Vision is the outermost ring. Then roadmap. Then release. Then iteration. Then the day of work.

The mistake most teams make is trying to do only one of these levels - usually the iteration or the day - and treating that as "planning." It isn't. It's scheduling. Planning is the whole stack.

Here's what that distinction actually costs you in practice. A team that only plans at the iteration level knows what they're doing this sprint. They don't know whether this sprint's work is the right work, whether it's sequenced correctly relative to what comes next, or whether the sum of all their sprints adds up to something that will be ready when it needs to be ready. They feel productive. They're not necessarily converging though. The disconnect between daily execution and the larger shape of the project is one of the most common - and most expensive - planning mistakes in small studios.

I’ve written separately about vision: what it is, how to build it, why it’s the most important alignment tool you have. If your vision isn’t clear yet, start there and come back. Everything below assumes you have a coherent vision: you know what game you’re making, why, what’s unique about it, what the pillars are, who it’s for, what the experience should feel like and what you won’t do.

What we’re building in this article is everything between that vision and actual daily work.

So, starting from the vision and working down:

Roadmap. The multi-month shape of the project. What phases, what major milestones, what big bets in what order. The answer to "where are we going and roughly when?" This is your strategic horizon. For Ironfall: first playable by week twelve, pre-production done by week twenty, production through the end of the year. Nothing at this level says what work gets done. It's a sequence of destinations with approximate timing attached.

Release. What each of those destinations actually is. When the roadmap says "first playable by week twelve," the release is the thing you're delivering in that first playable: the specific goal it needs to achieve, what done looks like and the full body of work required to get there. Roadmap and release are easy to conflate because they both involve milestones and timing, but the roadmap answers "when" and the release answers "what." You need both.

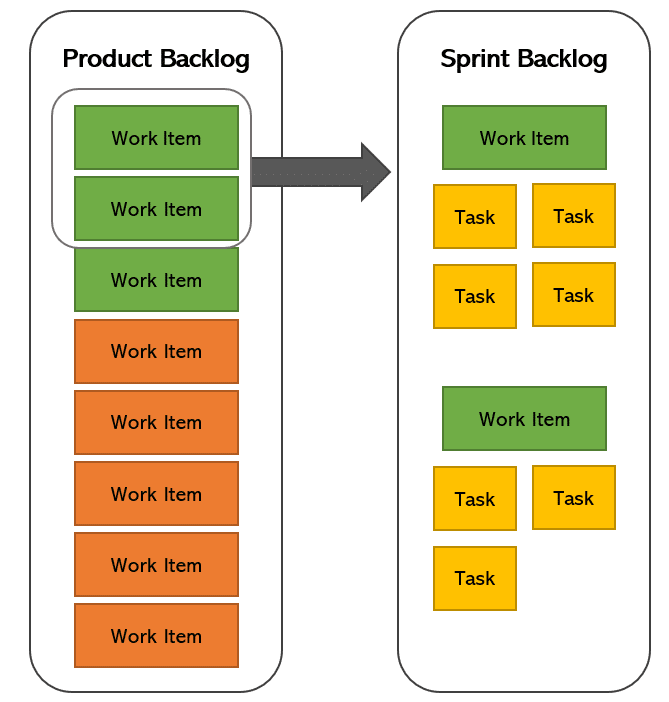

Iteration. A sprint's worth of committed work. The two-week window (or whatever cadence you use) where the team selects a chunk of the release backlog and commits to completing it. This is where stories get pulled, work gets done and actual velocity data gets generated.

Day. Individual tasks and daily priorities. This mostly emerges from the iteration plan.

Each level should inform the one below it. The roadmap shapes the release plan. The release plan shapes what goes into iterations. The iteration plan shapes the day. When they’re disconnected, when the daily work has no visible relationship to the release, which has no visible relationship to the roadmap, you get a team that is busy but not necessarily converging on, or delivering, valuable results.

Step one: define the high-level goals for the project

Before you can build a useful roadmap, you need to be honest about what success actually looks like for this project. Not in a fuzzy, aspirational way. In a concrete, answerable way.

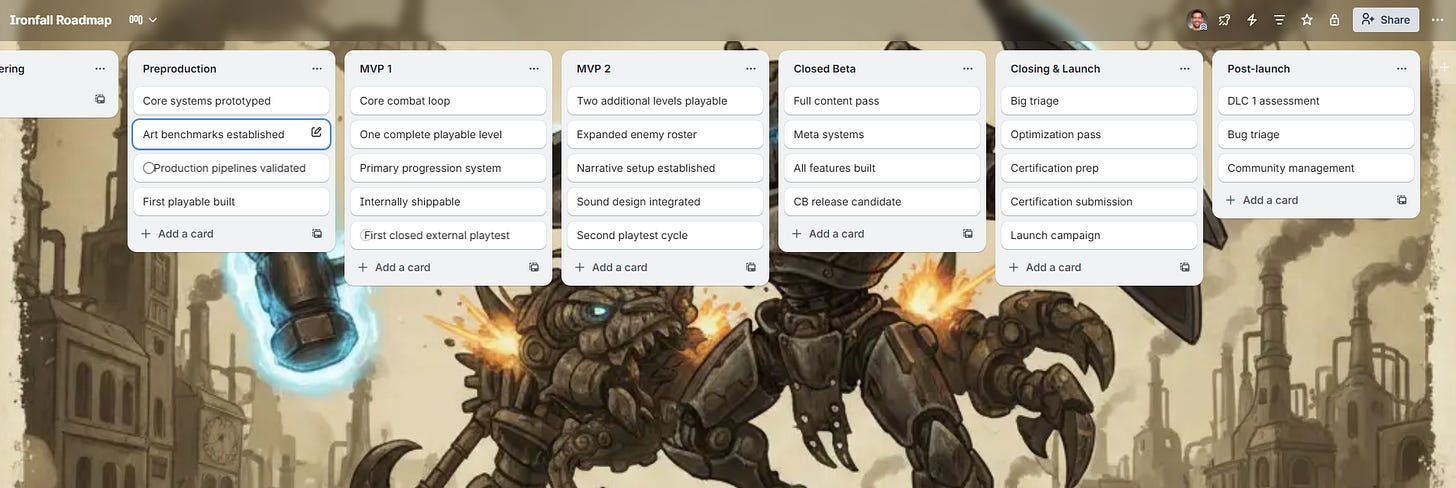

For Ironfall, the team might define their high-level project goals like this:

Prove out our combat system well enough within 14 months to pitch a publisher

Build a demo before next year’s Steam Next Fest that gets us to 30k wishlists before Early Access

Ship a polished, complete PC build on Steam, rated at or above a 7/10 by players in the target genre

Ship a 6-8 hour single-player experience that demonstrates our studio can execute a complete narrative arc

Maintain a team of 5, no crunch, sustainable pace throughout

These are not the same as the creative pillars or the vision. These are project outcomes - the things that define whether this endeavor succeeded as a project, separate from whether the game is good (though they're related). They answer: what are we trying to achieve and how will we know if we did?

Goals at this level should be:

Specific enough to generate decisions. “Make a great game” is not a goal. “Ship a feature-complete, stable, well-optimized build by Q3 of next year” is a goal. Specificity forces honesty.

Measurable or at least evaluable. You should be able to look at a goal and say, clearly, whether it was met or not. If you can’t, it’s not a goal, it’s an expression of hope.

Grounded in your actual constraints. A five-person bootstrapped team setting a goal of “achieve 150K wishlists before launch” is either a stretch target or wishful thinking, and you should know which one it is when you write it down.

Aligned with each other. If one goal says “ship fast” and another says “achieve AAA production values,” those are in tension and you need to decide how you’re resolving that tension before it surfaces in production as a fight.

Write these down. Put them somewhere visible. Socialize them regularly. They become the reference point for every hard tradeoff you’ll face in the next year or two of development.

Step two: outline the roadmap

A roadmap is not a schedule. A schedule tells you when specific tasks will be done. A roadmap tells you what phases the project moves through and in what rough order. It operates at the level of months and milestones, not weeks and tasks.

For game development, the roadmap should normally map to the development phases. If you haven't read my piece on game development phases it might be worth doing that before going further. The phase structure I use there is the foundation for what a roadmap looks like in practice.

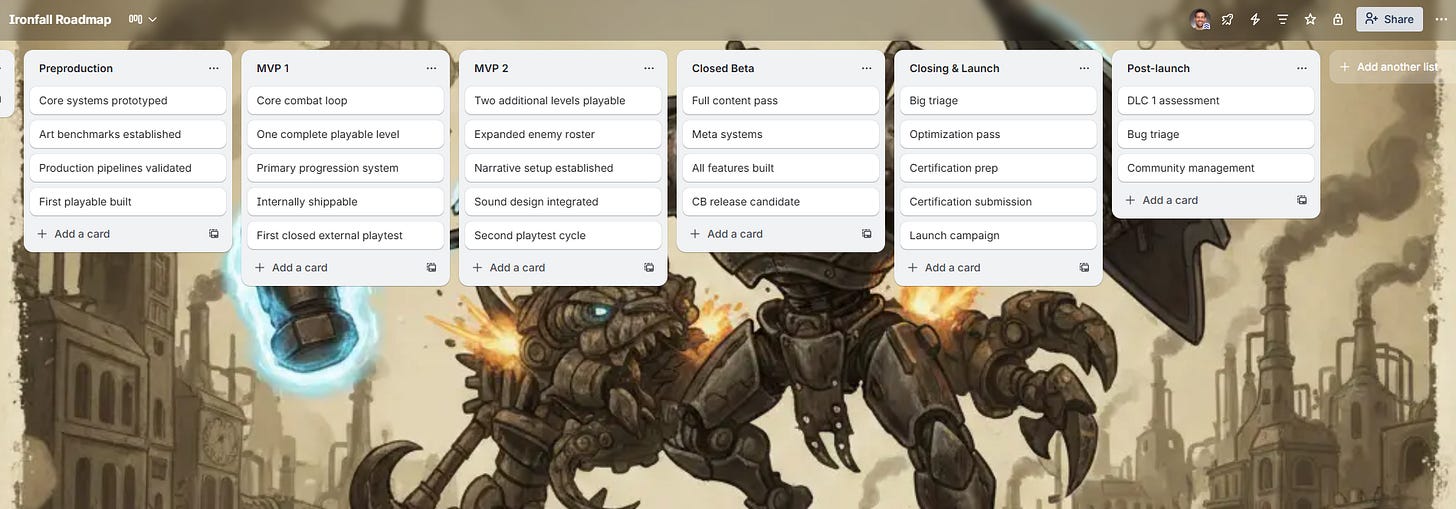

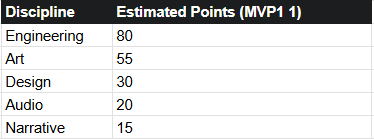

At the roadmap level, Ironfall’s planning might look something like this:

Months 1-2: Pre-production. Core systems prototyped, art benchmarks established, production pipeline validated, first playable built. Team aligned on scope, tooling and working agreements.

Months 3-6: Production - MVP 1. Core combat loop, one complete playable level, primary progression system. Internally shippable. First closed external playtest.

Months 7-9: Production - MVP 2. Two additional levels, expanded enemy roster, narrative setup established, sound design integrated. Second playtest cycle.

Months 10-12: Production - MVP 3. Full content pass, meta systems (inventory, upgrades), all features built. Closed beta candidate.

Months 13-15: Closing. Bug triage, optimization, certification prep, launch campaign. No new features.

That's a roadmap. Rough enough to breathe, specific enough to plan against and have intelligent conversations around.
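If it helps to see that roadmap as data rather than prose, here's a minimal sketch in Python. The phase names and month ranges come straight from the Ironfall list above; the `Phase` class and the `find_gaps_or_overlaps` helper are names I made up for illustration. All it does is check that the phases line up month to month and add up the total duration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    start_month: int  # inclusive
    end_month: int    # inclusive

    @property
    def duration(self) -> int:
        return self.end_month - self.start_month + 1


# Phases copied from the Ironfall roadmap above.
ROADMAP = [
    Phase("Pre-production", 1, 2),
    Phase("Production - MVP 1", 3, 6),
    Phase("Production - MVP 2", 7, 9),
    Phase("Production - MVP 3", 10, 12),
    Phase("Closing", 13, 15),
]


def find_gaps_or_overlaps(phases):
    """Return pairs of adjacent phases that do not line up month to month."""
    problems = []
    for prev, nxt in zip(phases, phases[1:]):
        if nxt.start_month != prev.end_month + 1:
            problems.append((prev.name, nxt.name))
    return problems


total_months = sum(phase.duration for phase in ROADMAP)
```

A check like this is only useful at the rough, months-level grain a roadmap lives at. The moment you start adding tasks to it, you've built a schedule.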

A few things worth naming about roadmaps:

They’re not commitments to dates. They're your best current thinking about sequencing and duration, informed by what you know today. When new information arrives - a feature turns out to be harder than expected, a playtest reveals a core loop problem that needs addressing - the roadmap changes. That's not a planning failure. That's planning working as intended.

They should have explicit milestones. Not just “MVP 1 done” but a crisp definition of what “MVP 1 done” means. Unambiguous criteria that anyone on the team could evaluate. We’ll come back to this.

They need to account for phases and transitions. Moving from pre-production to production is not a neutral moment. It requires certain things to be true: pipelines built, benchmarks established, documentation in place. The roadmap should mark those transitions and the conditions for crossing them.

In a planning tool like, say, Trello, a roadmap can live on its own board - a single board with a list for each major phase and cards representing the key milestones and deliverables within each phase. This isn’t where you track daily work. It’s where you track the shape of the project.

Step three: define milestones

Milestones are the checkpoints on your roadmap. They mark moments where you pause, verify the state of the project and make a conscious decision about whether to continue, adjust or stop.

Most teams treat milestones loosely, as rough dates attached to fuzzy descriptions. “Alpha by September” without any clear definition of what Alpha means. This is a reliable way to reach September, call it Alpha and find out three months later that you weren’t actually where you thought you were.

A useful milestone has three components:

A name and date. Even if the date is approximate, you need one. A milestone without a target date is an aspiration, not a checkpoint.

Explicit completion criteria. Not “all features built” but: which features, to what standard, verified how? A milestone that any team member could independently evaluate as met or not met.

A Go/No-Go gate. An honest answer to: are we ready to move to the next phase? This is where you catch accumulated problems before they become production crises.

For Ironfall, the MVP 1 milestone might look like this:

MVP 1 - Target: End of Month 6

Completion criteria:

Core melee combat system implemented, integrated and stable in build

One complete level built to benchmark visual standard, playable start to finish

Basic progression system (XP, leveling, stat application) functional

No P1 or P2 bugs open

Build passes a full internal QA sweep

Milestone playtest conducted with at least 6 external players

Go criteria:

Combat feels engaging and responsive per playtest feedback

No critical technical blockers unresolved

Team confidence in the plan for MVP 2 is high

Budget and schedule are within acceptable variance of the plan

That specificity is uncomfortable to write, because it exposes you. If you write it down clearly, you know whether you hit it or not, and there's nowhere to hide. That discomfort is the point.
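To make the "nowhere to hide" part concrete, here's a small Python sketch of a milestone as a checklist. The `Milestone` class is my own illustration, not a feature of any tool, and the True/False statuses are hypothetical. It also assumes the strictest possible gate: every criterion must be met for a Go. In practice the gate is a conversation, and the list of unmet criteria is the agenda for it.

```python
from dataclasses import dataclass, field


@dataclass
class Milestone:
    name: str
    target: str
    # Each dict maps a criterion's text to whether it is currently met.
    completion_criteria: dict = field(default_factory=dict)
    go_criteria: dict = field(default_factory=dict)

    def unmet(self):
        """Every criterion, of either kind, that is not yet satisfied."""
        all_criteria = {**self.completion_criteria, **self.go_criteria}
        return [text for text, met in all_criteria.items() if not met]

    def decision(self) -> str:
        # Assumption: a strict gate where any unmet criterion means No-Go.
        return "GO" if not self.unmet() else "NO-GO"


mvp1 = Milestone(
    name="MVP 1",
    target="End of Month 6",
    completion_criteria={
        "Core melee combat implemented, integrated and stable": True,
        "One complete level at benchmark visual standard": True,
        "No P1 or P2 bugs open": False,  # hypothetical status
    },
    go_criteria={
        "Combat feels engaging and responsive per playtest feedback": True,
    },
)
```

With those made-up statuses, `mvp1.decision()` comes back as a No-Go and `mvp1.unmet()` tells you exactly why.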

Those first three steps are about the shape of the project over time - where you’re going and how you’ll know when you’ve arrived. The next steps shift to a different question: what is the work, exactly? The roadmap tells you the destination. The following steps are about understanding everything that has to happen to get there.

Step four: understand the work breakdown structure

Here is where we go from roadmap to actual scope definition.

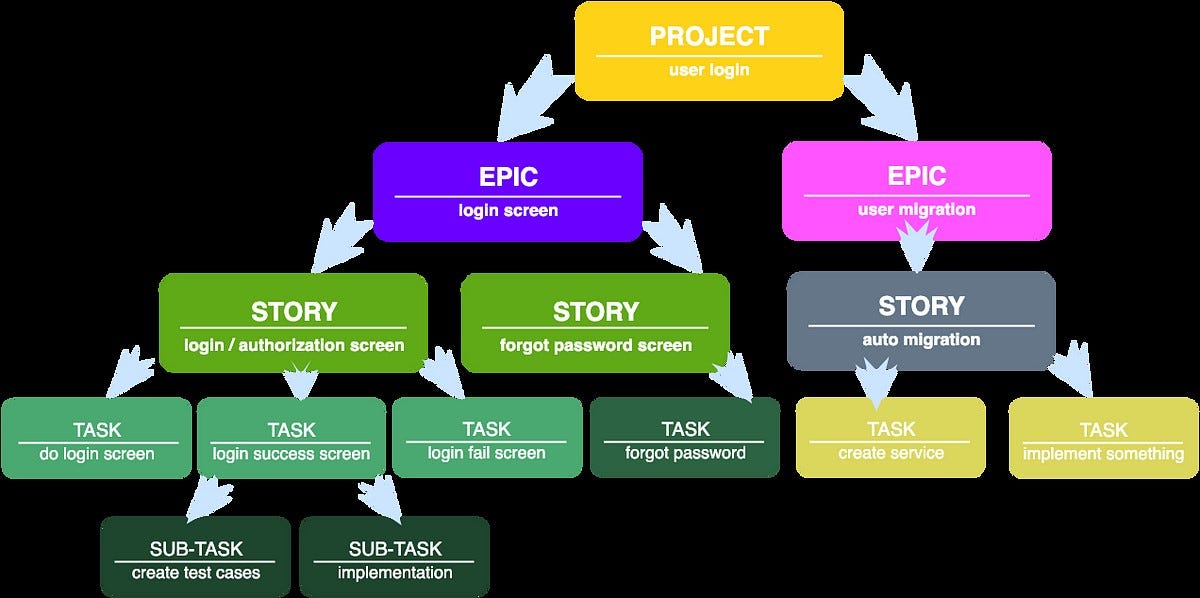

A Work Breakdown Structure, or WBS, is one of the most valuable practical tools in project management. The PMBOK describes it as a hierarchical decomposition of all the work required to complete the project. In plain English: you start at the finished game and work backward, breaking it down into every major deliverable, sub-deliverable and work package that needs to exist for the game to be complete.

Most teams find this the most uncomfortable part of the planning process, because it makes the scope explicit in a way that reveals how much there actually is to do. Which is, of course, exactly why it's valuable. Better to see the mountain now than to discover its true height at the halfway point.

For games, this translates naturally to an Epics > User Stories > Tasks hierarchy - which is the agile representation of the same idea.

Epics are large bodies of thematically related work, too big to complete in one sitting and usually spanning multiple disciplines over multiple iterations. Each represents a major feature, system or content area. Think: combat system, progression system, level 1, audio implementation, UI & HUD.

User Stories decompose epics into specific, valuable increments that a team can actually complete in a sprint or a reasonable unit of time. Each story is framed from the perspective of the player or user, capturing what they need and why. We’ll get into the anatomy of a good user story shortly.

Tasks are the last piece of the work hierarchy. They’re specific technical actions that need to happen to complete a story. These live at the level of individual work items that might take a day or two.

For Ironfall, the Combat System epic might break down like this:

Epic: Combat System

User Story: As a player, I can perform a light attack that deals damage and plays an appropriate animation, so that combat feels responsive and satisfying

User Story: As a player, I can perform a heavy attack with longer wind-up and higher damage, so that there’s meaningful tactical choice in combat

User Story: As a player, I have a stamina bar that depletes on attacks and recovers over time, so that I have to manage resources in combat

User Story: As a player, I can dodge-roll to avoid incoming attacks, so that positioning and timing are meaningful

User Story: As a player, I receive clear visual and audio feedback when I take damage, so that I understand the state of combat

Each of these is a story, not a task. The tasks live inside each story - things like "implement dodge animation state machine," "hook stamina depletion to attack button press," "integrate SFX trigger on hit confirmation."

The WBS is your scope baseline. Once it exists, anything added to the game is explicitly a scope change, and scope changes have costs, which means they require a real decision.
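Here's a rough Python sketch of the Epic > User Story > Task hierarchy and of what "scope baseline" means mechanically. The class and function names are assumptions of mine, and only two of the combat stories are included to keep it short. The idea: freeze the set of stories once, then anything that shows up later is visibly a scope change.

```python
from dataclasses import dataclass, field


@dataclass
class Story:
    text: str
    tasks: list = field(default_factory=list)


@dataclass
class Epic:
    name: str
    stories: list = field(default_factory=list)


combat = Epic("Combat System", [
    Story(
        "As a player, I can dodge-roll to avoid incoming attacks, "
        "so that positioning and timing are meaningful",
        tasks=["implement dodge animation state machine"],
    ),
    Story(
        "As a player, I have a stamina bar that depletes on attacks and "
        "recovers over time, so that I have to manage resources in combat",
        tasks=["hook stamina depletion to attack button press"],
    ),
])


def baseline(epics):
    """Freeze the current scope as a set of (epic name, story text) pairs."""
    return {(epic.name, story.text) for epic in epics for story in epic.stories}


def scope_changes(frozen, epics):
    """Stories that exist now but were not part of the frozen baseline."""
    return sorted(baseline(epics) - frozen)
```

Call `baseline([combat])` when the WBS is agreed, and `scope_changes(...)` whenever someone comes back from a demo with a new idea. An empty list means the scope is still what you signed up for.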

Step five: write good user stories

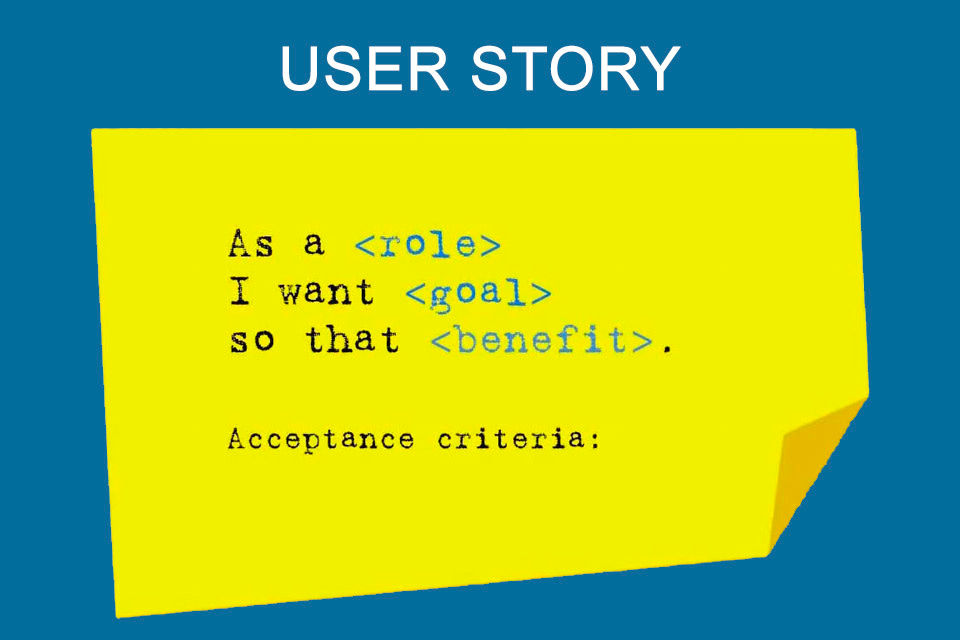

User stories are not task descriptions. They're not bug reports. They're not feature requests. They are small, specific, valuable expressions of what someone needs from the system and why.

The standard format, from Mike Cohn’s User Stories Applied and widely reinforced by agile practice, is:

As a [type of user or player], I [need or want something], so that [I can achieve some goal or experience some outcome].

The “so that” is the part most people skip. It’s also the most important part. Without it, you don’t know whether a story was successfully implemented - you just know whether the technical work was done. With it, you have a way to evaluate whether the implementation actually serves its purpose.
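If you keep stories in a spreadsheet or a script rather than on cards, one way to stop the "so that" from being skipped is to make it a required field. A minimal sketch in Python, where `UserStory` and its field names are my own invention:

```python
from dataclasses import dataclass


@dataclass
class UserStory:
    role: str     # who needs it, e.g. "player"
    need: str     # what they need or want
    outcome: str  # the "so that" clause

    def __post_init__(self):
        # Refuse to create a story that skips the "so that".
        if not self.outcome.strip():
            raise ValueError('a story needs a "so that" outcome')

    def render(self) -> str:
        return f"As a {self.role}, I {self.need}, so that {self.outcome}"


dodge = UserStory(
    role="player",
    need="can dodge-roll to avoid incoming attacks",
    outcome="positioning and timing are meaningful",
)
```

`dodge.render()` gives you the sentence in the standard format. Trying to create a story with an empty outcome raises an error, which is roughly what a good producer does in backlog refinement anyway.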

What makes a user story good? The INVEST criteria - which have been around long enough that I’m slightly embarrassed to still be citing them - remain genuinely useful:

Independent - ideally, the story can be developed and tested without requiring another specific story to be done first. Dependencies exist, but stories should minimize them.

Negotiable - the story describes an outcome, not a solution. The specific implementation is a conversation between game director and relevant discipline developers, not a prescription.

Valuable - the story delivers something that matters to a player or to the project. A story with no clear value for a player or stakeholder is a task masquerading as a story.

Estimable - the team should be able to estimate it. Stories that can’t be estimated are usually too large or too poorly understood.

Small - a story should be completable within a sprint. If it can’t be, it needs to be broken down further.

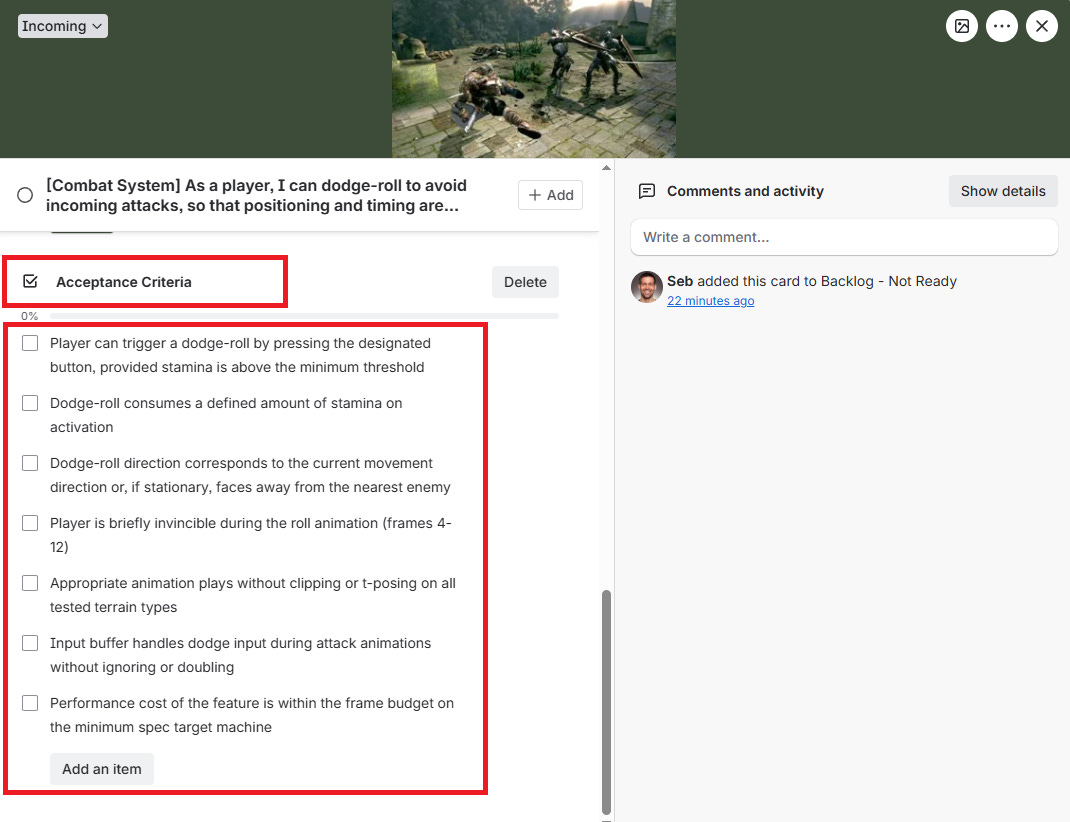

Testable - you can verify, objectively, whether the story was done. This is where acceptance criteria come in.

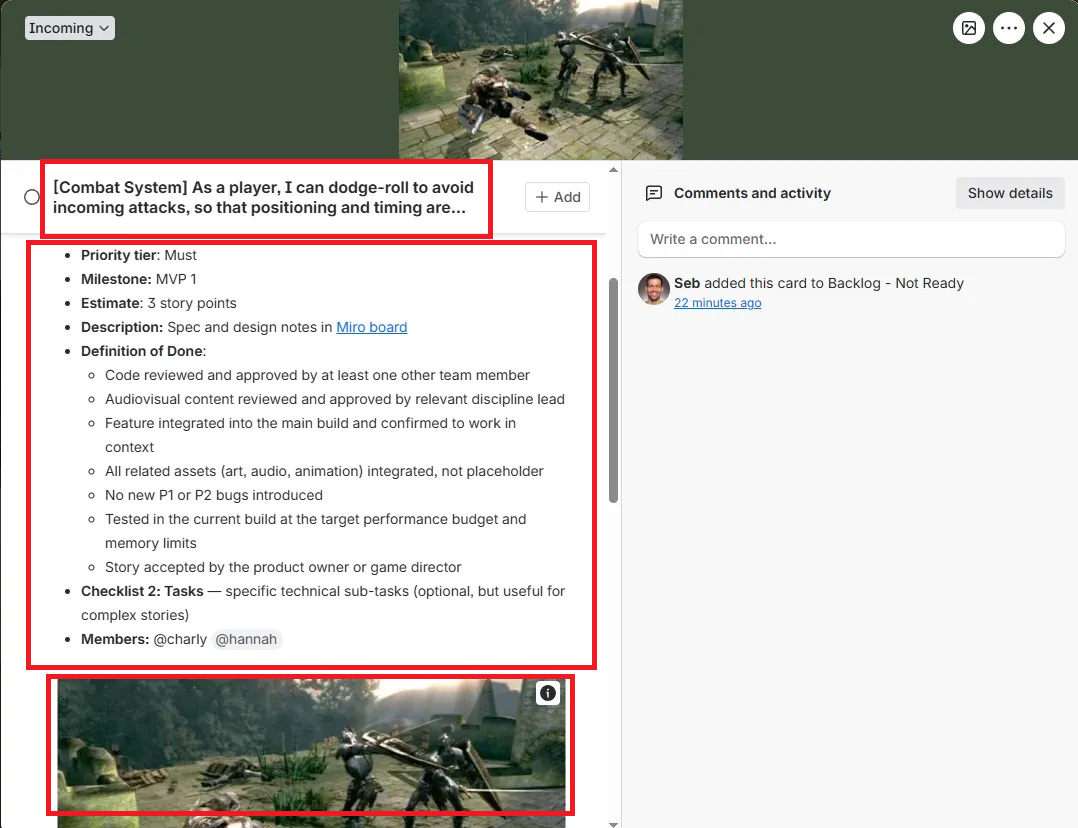

Step six: articulate conditions of done and acceptance criteria

Every user story needs two things before it’s ready to be worked on: a Definition of Done that applies to all stories on the project, and acceptance criteria specific to this story.

The Definition of Done answers: what does “complete” mean for any piece of work on this project? This is a team-wide agreement, not a per-story invention. A typical Definition of Done for Ironfall might be:

Code reviewed and approved by at least one other team member

Audiovisual content reviewed and approved by relevant discipline lead

Feature integrated into the main build and confirmed to work in context

All related assets (art, audio, animation) integrated, not placeholder

No new P1 or P2 bugs introduced

Tested in the current build at the target performance budget and memory limits

Story accepted by the product owner or game director

That last line matters. A story isn’t done when the developer says it’s done. It’s done when someone has evaluated the implementation against the acceptance criteria and confirmed it meets them. This distinction sounds bureaucratic. In practice, it prevents enormous amounts of debt from accumulating silently.

Acceptance criteria are the specific conditions that must be true for this particular story to be accepted as complete. They should be written before work begins, in a format that makes evaluation unambiguous.

For the dodge-roll story above in our Ironfall combat system:

Acceptance Criteria:

Player can trigger a dodge-roll by pressing the designated button, provided stamina is above the minimum threshold

Dodge-roll consumes a defined amount of stamina on activation

Dodge-roll direction corresponds to the current movement direction or, if stationary, faces away from the nearest enemy

Player is briefly invincible during the roll animation (frames 4-12)

Appropriate animation plays without clipping or t-posing on all tested terrain types

Input buffer handles dodge input during attack animations without ignoring or doubling

Performance cost of the feature is within the frame budget on the minimum spec target machine

These are conditions, not descriptions. They’re the basis for a conversation between developer and product owner at the moment of review.
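The relationship between the two lists is simple enough to sketch in a few lines of Python: a story is accepted only when every project-wide Definition of Done item and every story-specific acceptance criterion has been checked off by the reviewer. The function name and the shortened lists below are assumptions for illustration; the criteria text is lifted from the examples above.

```python
DEFINITION_OF_DONE = [
    "Code reviewed and approved by at least one other team member",
    "Feature integrated into the main build and confirmed to work in context",
    "No new P1 or P2 bugs introduced",
]

DODGE_ROLL_CRITERIA = [
    "Dodge-roll consumes a defined amount of stamina on activation",
    "Player is briefly invincible during the roll animation (frames 4-12)",
]


def review(definition_of_done, acceptance_criteria, checked):
    """Return (accepted, missing).

    `checked` is the list of items the reviewer has confirmed in the build.
    The story is accepted only if nothing from either list is missing.
    """
    required = set(definition_of_done) | set(acceptance_criteria)
    missing = sorted(required - set(checked))
    return (not missing), missing
```

The useful output isn't the True or False. It's the `missing` list, because that's what the developer and the product owner actually talk about at review.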

Step seven: build the backlog

The backlog is not a list of everything you want in the game. It is a prioritized, estimated, ready-to-work set of user stories that represents the known plan for the project at this moment.

Building the backlog properly takes time and requires a little discipline. Here’s how I think about it:

Start with epics and then break them down. Don’t try to write every story before you have the epics clear. The risk is fragmentation - spending days writing stories for a system that might get cut or restructured at the epic level. Get the epic structure right first, then decompose the highest-priority epics into stories.

Not everything needs to be story-ready immediately. Future epics can stay at the epic level until they move closer to the work horizon. The practice of keeping near-term work detailed and far-term work rough is called progressive elaboration, and it’s one of the more honest things agile planning does - it acknowledges that your understanding of distant work is inherently lower-fidelity.

Stories need to be "ready" before they're "done." Ready means: the story is written, estimated, has acceptance criteria and has no unresolved dependencies that would block it from starting. In agile practice this is sometimes called the Definition of Ready. It's the complement to the Definition of Done. A story that isn't ready shouldn't be pulled into a sprint.
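The Definition of Ready is easy to turn into a checklist. Here's a hedged Python sketch that mirrors the four conditions above (written, estimated, has acceptance criteria, no unresolved dependencies). `BacklogStory` and `readiness_gaps` are hypothetical names, and your team's own Definition of Ready may well include more than this.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BacklogStory:
    title: str
    points: Optional[int] = None
    acceptance_criteria: list = field(default_factory=list)
    open_dependencies: list = field(default_factory=list)


def readiness_gaps(story):
    """List the reasons a story is not ready. An empty list means ready."""
    gaps = []
    if not story.title.strip():
        gaps.append("not written")
    if story.points is None:
        gaps.append("not estimated")
    if not story.acceptance_criteria:
        gaps.append("no acceptance criteria")
    if story.open_dependencies:
        gaps.append("unresolved dependencies")
    return gaps


dodge = BacklogStory(
    title="Dodge-roll",
    points=5,  # hypothetical estimate
    acceptance_criteria=["Dodge-roll consumes stamina on activation"],
    open_dependencies=["dodge animation not delivered"],  # hypothetical
)
```

Here `readiness_gaps(dodge)` returns one gap, the open dependency, so the story stays out of the sprint until the animation lands.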

Step eight: prioritize the backlog

Backlog prioritization is where a lot of teams lean on MoSCoW - Must have, Should have, Could have, Won't have - and stop there. MoSCoW is a fine starting framework, but it has a tendency to produce arguments at the Must/Should boundary, and it doesn't help you sequence within a priority tier. Knowing that ten things are all Musts doesn't tell you what to build first.

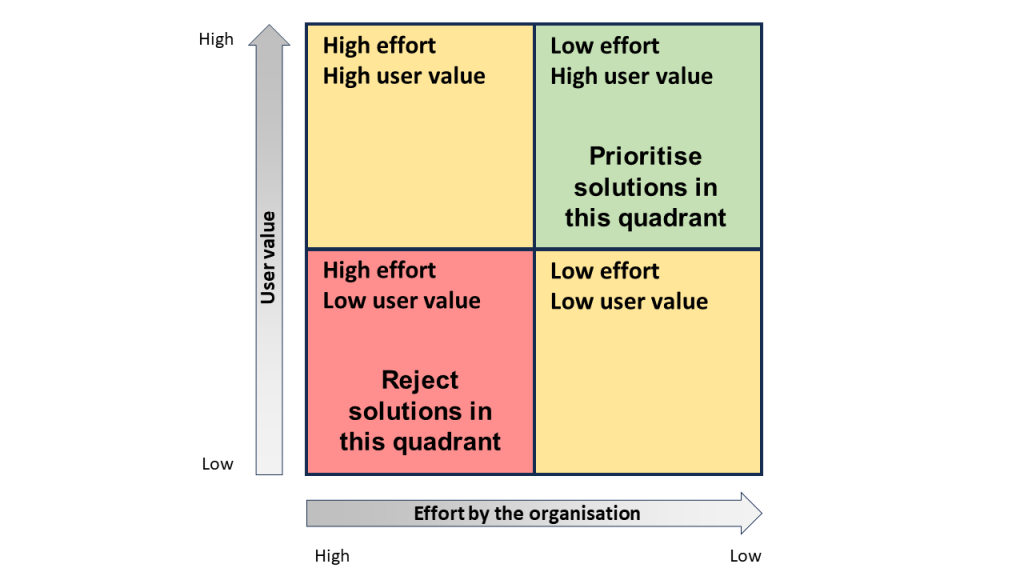

I wrote about prioritization techniques in a separate piece, so I’ll stay high-level here. The approach I want to add to MoSCoW is a value/effort matrix, which gives you the sequencing inputs that MoSCoW lacks.

The logic is simple:

Draw a 2x2. X-axis is effort (high to low). Y-axis is value (low to high). Plot your stories or epics.

The quadrants:

High value, low effort - do these first. They are your quick wins and your early momentum builders.

High value, high effort - plan these carefully. They’re your core investments. Understand dependencies and technical risk before committing.

Low value, low effort - defer or deprioritize. Easy to do, but not important.

Low value, high effort - cut or move to the Won’t have bucket. These are traps.

For Ironfall, the core combat loop stories (dodge, attack, stamina management) are high value and, assuming the team has done some prototyping, manageable effort. They go first. A cosmetic equipment preview UI might be low value and relatively low effort - nice to have, but not blocking anything. An elaborate multiplayer invitation system would be high effort and, for this game, low value. That one probably won’t be implemented.
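The 2x2 is small enough to write down as a lookup table. A minimal Python sketch, with the caveat that the high/low ratings are judgment calls made by people, not something the code can work out for you. I'm also reading "manageable effort" for the combat loop as "low", which is my interpretation.

```python
# (value, effort) -> what the matrix suggests doing with the story
QUADRANTS = {
    ("high", "low"): "do first",
    ("high", "high"): "plan carefully",
    ("low", "low"): "defer",
    ("low", "high"): "cut",
}


def suggest(value: str, effort: str) -> str:
    """Look up the quadrant for a story rated 'high' or 'low' on each axis."""
    return QUADRANTS[(value, effort)]


# Ratings follow the Ironfall examples above.
ironfall = {
    "core combat loop": ("high", "low"),  # "manageable" read as low effort
    "cosmetic equipment preview UI": ("low", "low"),
    "multiplayer invitation system": ("low", "high"),
}

plan = {story: suggest(*rating) for story, rating in ironfall.items()}
```

Treat the output as a conversation starter. If the table says "cut" and the team's gut says "keep", that disagreement is exactly what you want to surface.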

The value dimension here is player value as filtered through the creative pillars. Stories that directly advance the core experience the game is trying to create are high value. Stories that are tangential, nice-to-have or system-level (important, but not directly experienced by the player) sit lower on the value axis and should be sequenced accordingly.

One thing I want to name directly: prioritization is not a formula. MoSCoW and the value/effort matrix are tools for structuring a conversation, not algorithms that produce a right answer. The actual prioritization decisions are made by people, using judgment, with the vision and the creative pillars as their primary reference. If a story advances the pillars, that is evidence for priority. If a story doesn’t clearly connect to the pillars or the milestones, that is a question worth asking.

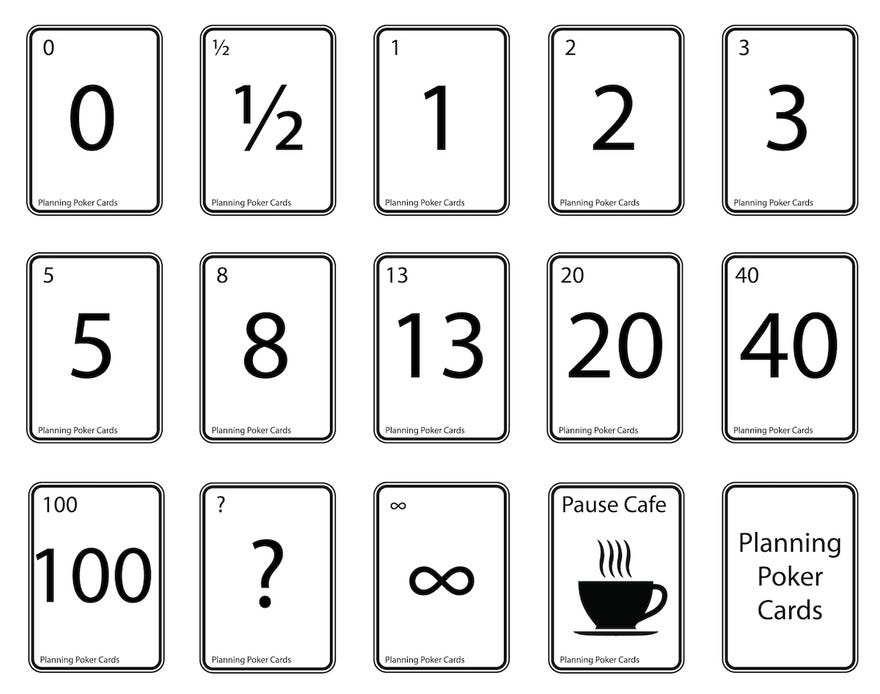

Step nine: estimate the backlog

Estimation is one of the most discussed and least understood topics in software and game development. Entire books have been written on it. Mike Cohn’s Agile Estimating and Planning is probably one of the most practical. I’ll give you the version that I believe applies to small indie teams.

First, a distinction that matters: estimating is not scheduling. Estimating is about understanding the relative size and complexity of work. Scheduling is about determining when that work can realistically happen. Confusing the two is one of the most common sources of planning dysfunction I've seen.

Story points vs. hours

In agile, using story points is a common way of estimating work. Story points are a relative measure of complexity, effort and uncertainty - not time. The key word here is relative. You’re not saying “this takes three hours.” You’re saying “this is about twice as complex as that.” That distinction matters because we humans are not great at estimating absolute time but reasonably good at comparing things to each other. Ask a developer how long a feature will take and they’ll give you a number that’s very often wrong. Ask them whether it’s more or less complex than something they already built and they’ll give you something you can actually use.

In practice, you calibrate against a reference story. For Ironfall, the team might agree early on that implementing the basic health bar UI is a 1 - it’s well-understood, straightforward, no surprises expected. From there, everything else gets estimated relative to that. The combat parry mechanic - with its input window, animation state machine, hit detection timing and feedback triggers - is probably a 5. The full dialogue system, with branching logic, localization hooks, speaker portraits and integration into the narrative state manager, is probably an 8, maybe more, and the team should talk about whether it needs to be broken down further before anyone starts work on it. An 8 is almost always hiding a conversation that hasn’t happened yet.

Hours are an absolute measure of time. Unless they have a great deal of experience with the subject and with each other, teams that estimate in hours tend to be optimistic about productivity: they forget meetings, context-switching, interruptions and the basic reality that development work rarely goes exactly as planned.

For small indie teams, I generally recommend estimating in story points using the Fibonacci sequence (1, 2, 3, 5, 8, 13). The widening gaps between the numbers reflect something true about uncertainty: the bigger a piece of work, the less confident you should be in your estimate.

The Fibonacci values:

1 - trivial, very well understood, no surprises expected

2 - simple, clear, minimal uncertainty

3 - moderate complexity, some unknowns

5 - meaningful complexity, requires careful thought

8 - large and/or uncertain, should consider splitting

13 - too big or too uncertain to work on directly

If your team estimates a story at 13, that is the estimate telling you to do more discovery work before attempting the story, not a signal to put it in the sprint anyway.
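For teams that track estimates in a script or spreadsheet, the scale and its warning signs fit in a few lines of Python. Two assumptions of mine in this sketch: in-between gut feelings get rounded up to the next value on the scale (rounding up is the cautious choice, not a rule from any book), and the guidance strings are paraphrases of the list above.

```python
SCALE = (1, 2, 3, 5, 8, 13)


def snap_to_scale(raw: float) -> int:
    """Round a gut-feel size up to the next value on the scale.

    Anything larger than the top of the scale is capped at 13, which
    already means "too big to work on directly".
    """
    for value in SCALE:
        if raw <= value:
            return value
    return SCALE[-1]


def guidance(points: int) -> str:
    """What the estimate itself is telling the team."""
    if points >= 13:
        return "do discovery work first"
    if points == 8:
        return "consider splitting"
    return "ok to plan"
```

So a story someone wants to call "about a 6" becomes an 8, and `guidance(8)` nudges the team to ask whether it should be split before it goes anywhere near a sprint.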

Planning poker

The practical mechanism for team estimation is planning poker, or some informal version of it. The team gathers around the backlog, someone reads a story, everyone thinks about it briefly and then simultaneously reveals their estimate. Differences trigger a conversation: why did the engineer think 5 and the designer think 2? Usually it's because they're imagining different implementations or one of them knows something the others don't. That conversation is the real value. The estimate that results is a product of shared understanding, not individual guesswork.

For the five-person Ironfall team, planning poker might happen online or in person. The important thing is that estimation is done together and that estimates are not assigned by one person to another. A developer estimating someone else's work has limited information. Estimation is a collaborative act.

For transparency, there are many other ways of estimating the backlog. I like this one because it’s proven and highly collaborative. As with so many of these activities: the real value is in the dialog between teammates.
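To show the mechanics of a single planning poker round, here's a tiny Python sketch. It assumes the simplest rule: if everyone reveals the same number, that's the estimate; if not, the people holding the lowest and highest cards explain their thinking and the team votes again. `poker_round` is a made-up name and the votes are hypothetical.

```python
def poker_round(votes: dict) -> dict:
    """Evaluate one simultaneous reveal.

    `votes` maps a team member to the story points they revealed.
    Returns the agreed estimate, or the people who should talk first.
    """
    low, high = min(votes.values()), max(votes.values())
    if low == high:
        return {"estimate": low, "discuss": []}
    # The outliers usually know something the others do not.
    outliers = sorted(name for name, v in votes.items() if v in (low, high))
    return {"estimate": None, "discuss": outliers}


first_reveal = poker_round({"engineer": 5, "designer": 2, "artist": 3})
second_reveal = poker_round({"engineer": 3, "designer": 3, "artist": 3})
```

In the first reveal nobody agrees, so the engineer and the designer talk. In the second, the team has converged on a 3. The code is trivial on purpose: the conversation in between is where the value is.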

Velocity

After your first two or three sprints, you’ll start to have a sense of your team’s velocity: the average number of story points completed per sprint. This is the number that drives your release planning.

If the Ironfall team typically completes about 30 points per two-week sprint and the backlog for MVP 1 contains 180 points of Ready stories, you’re looking at roughly six sprints to complete MVP 1 - about twelve weeks of calendar time. That’s probabilistic, not deterministic. Some sprints will be better, some worse. But it gives you a real number to plan against, derived from actual team performance rather than optimistic assumptions.
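The arithmetic behind that forecast, as a short Python sketch. The function name is mine; the numbers are the Ironfall figures from the paragraph above. Rounding up matters: a backlog that needs 6.2 sprints needs seven, not six.

```python
import math


def forecast(backlog_points: int, velocity: float, sprint_weeks: int = 2):
    """Return (sprints, calendar_weeks) needed to burn down the backlog."""
    sprints = math.ceil(backlog_points / velocity)
    return sprints, sprints * sprint_weeks


sprints, weeks = forecast(backlog_points=180, velocity=30)
# sprints == 6, weeks == 12
```

Change the backlog to 190 points and the same team needs seven sprints, which is a two-week slip from a ten-point scope increase. That's the kind of tradeoff this number makes visible.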

This is very different from building a schedule by adding up individual estimates and multiplying by a confidence factor. That kind of schedule-building is essentially hope formatted as a spreadsheet. Velocity-based forecasting is empirical. It says: here is what this team has actually demonstrated they can do and here is what the math suggests about the future. With that comes appropriate uncertainty and honest conversation about it.

One thing I want to draw attention to: early velocity numbers are less reliable than later ones. In the first sprint or two, the team is still getting oriented, the tooling isn't fully set up, the stories might not be as well-defined as they'll be later. Don't anchor to early velocity numbers too aggressively. Let them stabilize over three or four sprints before making hard forecasts against them.
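One way to respect that warm-up period is to leave the first couple of sprints out of the forecast and report a range instead of a single number. A minimal Python sketch, where the sprint history is hypothetical and the two-sprint warm-up is an assumption you should tune to your own team:

```python
import math
from statistics import mean


def forecast_range(backlog_points, velocities, warmup=2):
    """Forecast sprints remaining, ignoring the first `warmup` sprints."""
    stable = velocities[warmup:]
    if len(stable) < 2:
        raise ValueError("not enough stable sprints to forecast yet")
    return {
        "optimistic": math.ceil(backlog_points / max(stable)),
        "expected": math.ceil(backlog_points / mean(stable)),
        "pessimistic": math.ceil(backlog_points / min(stable)),
    }


# Hypothetical history: two shaky sprints, then the team settles in.
history = [14, 22, 28, 32, 30]
outlook = forecast_range(180, history)
```

With this made-up history the 180-point backlog lands at six sprints if things go as expected or well, and seven if the team repeats its worst settled sprint. Sharing the range rather than the single number is the honest conversation about uncertainty.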

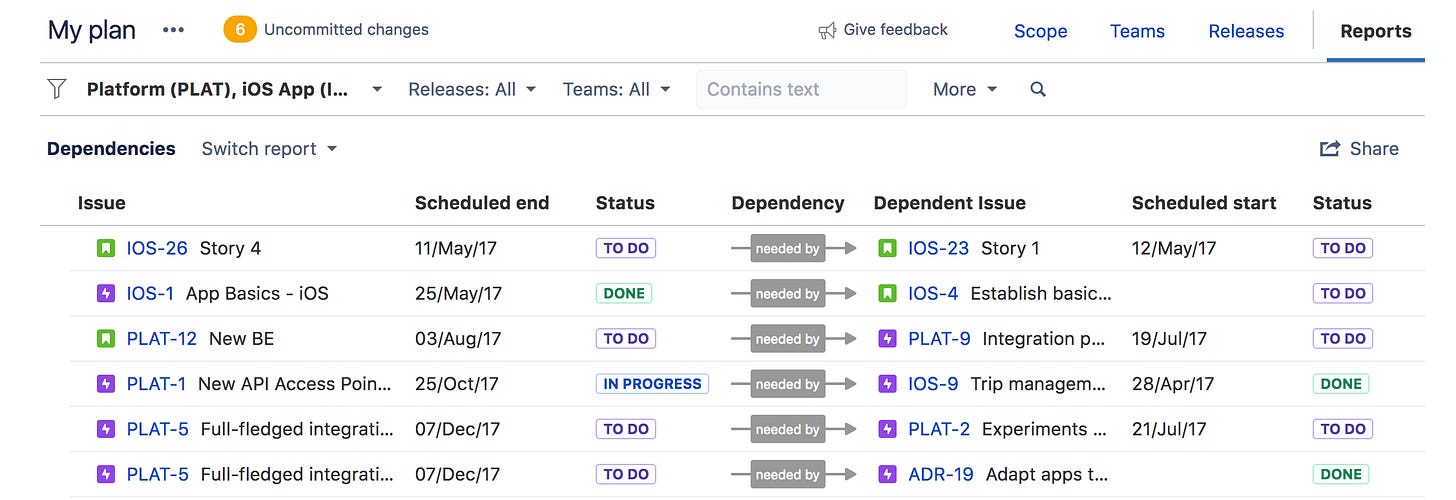

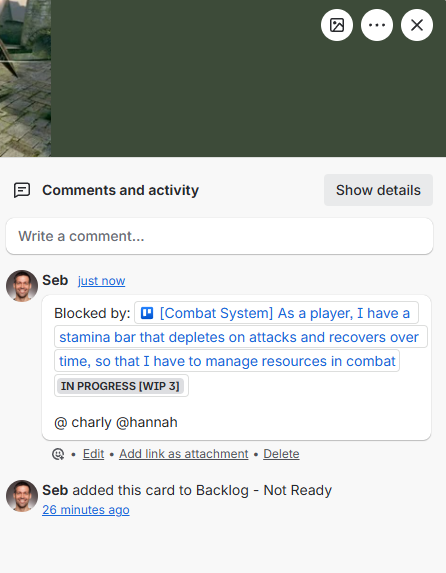

Step ten: note dependencies

Dependencies are probably the most under-documented aspect of backlog planning in small teams. And they are one of the most reliable sources of blocked work, wasted time and invisible critical path problems.

A dependency exists when story B cannot be started (or completed) until story A is done. These come in several varieties:

Technical dependencies - the most common. A feature can’t be implemented until a system it relies on exists. In Ironfall, enemy AI behaviors can’t be polished until the enemy health and damage system is implemented. Enemy encounters can’t be designed in levels until the enemy AI behaviors exist.

Asset dependencies - common in games and often underestimated. The combat animation system is only testable once the combat animations exist. The audio implementation for combat feedback can’t happen until there’s placeholder audio, at minimum.

Design dependencies - a systems design document needs to exist before the engineering implementation begins, or the engineer is building against assumptions that may not match what the designer intended.

External dependencies - work that is blocked on something outside the team. A middleware integration blocked on a license. An outsourced asset package blocked on vendor delivery.

What this looks like in practice: you pull a story into a sprint, the engineer starts work and somewhere around day two or three they discover they can't finish it because something else isn't done. The story gets pushed. The sprint fails to complete. Velocity suffers. Morale takes a small, unnecessary hit - and the frustrating thing is that the block was visible in advance if anyone had mapped it. That's the cost of undocumented dependencies, and it compounds across a production.

At the roadmap level, think about inter-Epic dependencies. If the Progression System Epic depends on the Combat System Epic being stable first, that dependency should be visible on your roadmap. It shapes sequencing at the macro level just as story-level dependencies shape sprint planning at the micro level.
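Mapping dependencies doesn't need special tooling. Here's a sketch using `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or newer). The dependency chain is a simplified version of the Ironfall enemy example above, and the helper names are my own. It gives you a safe build order and tells you, before sprint planning, what a story is still waiting on.

```python
from graphlib import TopologicalSorter

# story -> the set of stories that must be done before it can start
DEPENDS_ON = {
    "enemy health and damage system": set(),
    "enemy AI behaviors": {"enemy health and damage system"},
    "enemy encounter design": {"enemy AI behaviors"},
}


def build_order(depends_on):
    """An order in which every story comes after its prerequisites.

    TopologicalSorter raises graphlib.CycleError if the map contains a
    circular dependency, which is a planning problem worth knowing about.
    """
    return list(TopologicalSorter(depends_on).static_order())


def blocked_by(story, done, depends_on):
    """Prerequisites of `story` that are not finished yet."""
    return sorted(depends_on.get(story, set()) - set(done))
```

Before pulling "enemy encounter design" into a sprint, `blocked_by` with only the health and damage system done returns the AI behaviors story. That's the day-three surprise, caught on day zero.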

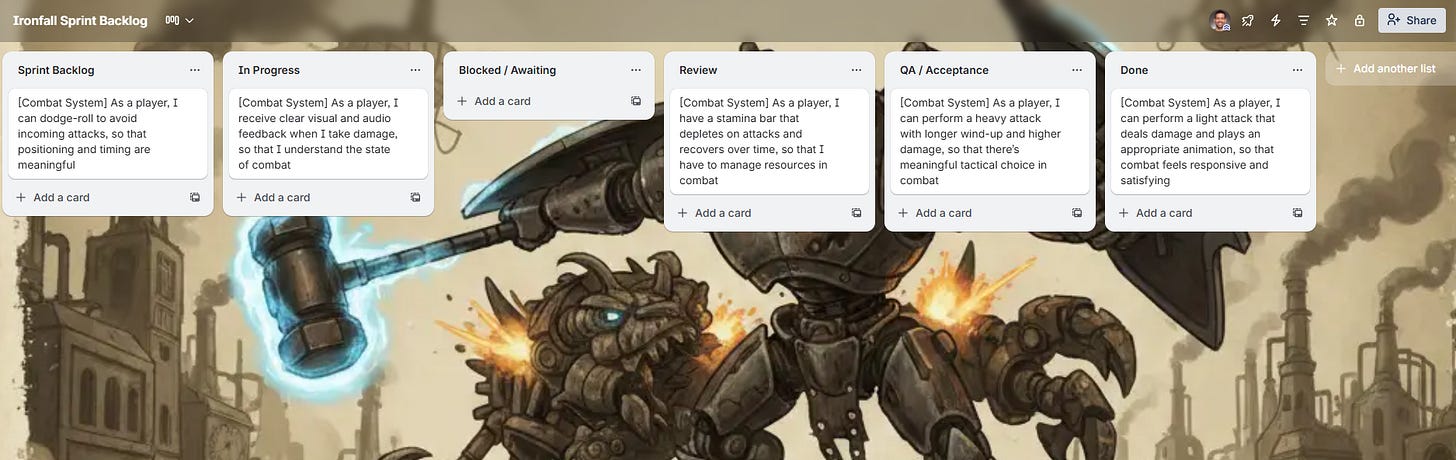

Step eleven: visualize effort by discipline

One of the planning blind spots I see most often in small teams is aggregating all the work together without looking at how that work breaks down by discipline. A backlog that looks perfectly sized at the aggregate level can contain brutal imbalances - one person overloaded while another is waiting for work.

Let’s use Ironfall again as an example. Say the work is for MVP 1. After you’ve written and estimated all the stories, you take a pass at mapping them to discipline: Engineering, Art, Design, Audio, Narrative (or whatever disciplines your specific team has). Add up the estimated points per discipline.

Something like:

Now cross-reference with your team composition and availability. If you have two engineers and one artist, the engineering work is distributed across two people while the art load falls entirely on one. Given your sprint velocity, is the art workload realistic in the MVP 1 timeframe alongside the engineering dependencies that require art to unblock them?

If the answer is no, you have three options: reduce scope (cut or defer art-heavy stories), increase art capacity (outsource, or bring in support) or extend the timeline. Those are real tradeoffs, and this analysis is how you see them before they surface as a production crisis. A simple spreadsheet works fine for this - it doesn't require sophisticated tooling.
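If you'd rather script it than build the spreadsheet, the arithmetic can be sketched in a few lines of Python. Every number here is made up for illustration: the story titles, the point values and the points-per-person figure are assumptions, not Ironfall's real backlog. It also assumes each story belongs to exactly one discipline, which is a simplification.

```python
from collections import defaultdict

# Hypothetical MVP 1 stories: (title, discipline, story points).
stories = [
    ("Player light attack", "Engineering", 5),
    ("Enemy health and damage", "Engineering", 8),
    ("Enemy AI behaviors", "Engineering", 8),
    ("Combat animations", "Art", 13),
    ("Enemy character models", "Art", 13),
    ("Combat tuning pass", "Design", 5),
    ("Placeholder combat audio", "Audio", 3),
]

# Hypothetical team: people per discipline, and an assumed number of
# points one person completes per sprint.
headcount = {"Engineering": 2, "Art": 1, "Design": 1, "Audio": 1}
POINTS_PER_PERSON_PER_SPRINT = 5

def sprints_needed(stories, headcount, points_per_person):
    """Sum points per discipline and divide by that discipline's capacity."""
    totals = defaultdict(int)
    for _title, discipline, points in stories:
        totals[discipline] += points
    return {
        discipline: total / (headcount[discipline] * points_per_person)
        for discipline, total in totals.items()
    }

load = sprints_needed(stories, headcount, POINTS_PER_PERSON_PER_SPRINT)
for discipline, sprints in sorted(load.items(), key=lambda item: -item[1]):
    print(f"{discipline}: {sprints:.1f} sprints")
```

With these invented numbers, Art needs more than twice as many sprints as Engineering even though it has fewer total stories. That gap is the imbalance the aggregate view hides.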

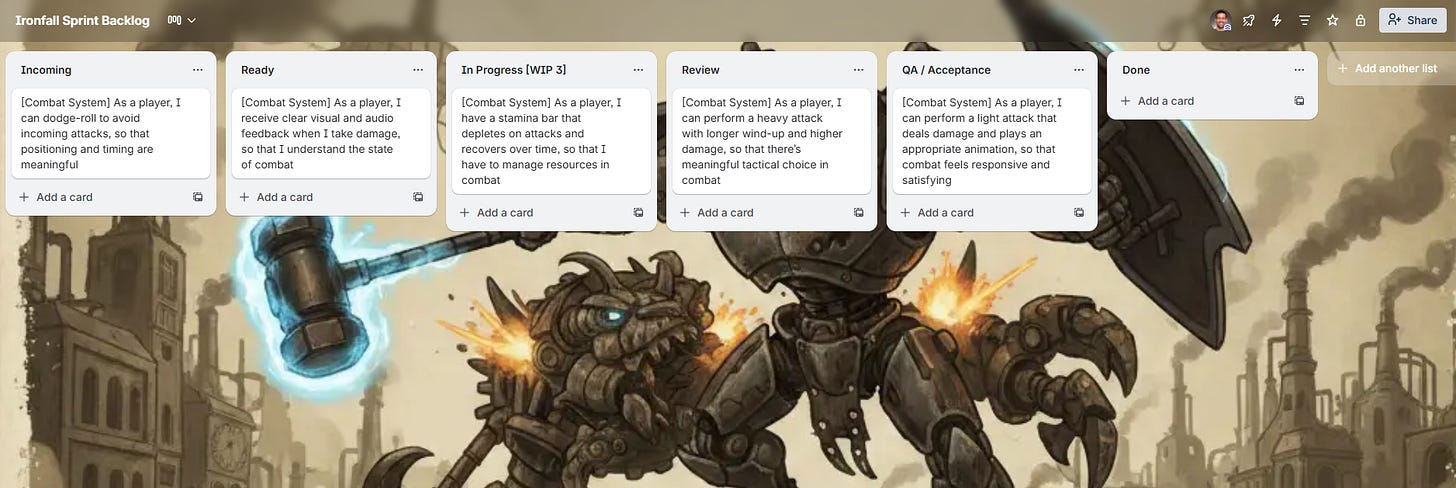

A simple and practical Trello setup

I’ve been referencing Trello throughout, so let me be a little more specific about how I’d set it up for Ironfall.

A quick disclaimer: Trello is one of dozens of tools that can do this job. Jira, Linear, Shortcut, Notion, Height, ClickUp - any of them can accommodate this structure. I'm using Trello here because it's free, simple and a good teaching example. Don't let tool selection become a two-week conversation. Pick something, set it up, start working.

Board 1: Roadmap

One board, big picture. Lists represent phases or major milestones. Cards represent key deliverables, milestones and major dependencies. Think of it as your strategic view - the shape of the whole project at a glance. Each card at this level represents a milestone or major deliverable, not a user story. You update this board when major things change and review it at each milestone. Daily work doesn't live here.

Board 2: Backlog

This is where stories live before they enter active development. Structure it as lists representing the state of readiness: Epics (not yet broken down), Icebox (broken down but not yet prioritized), Ready (written, estimated, acceptance criteria defined, no blocking dependencies) and Archived (cut or deferred). A story moves from left to right as it becomes more ready to work on.

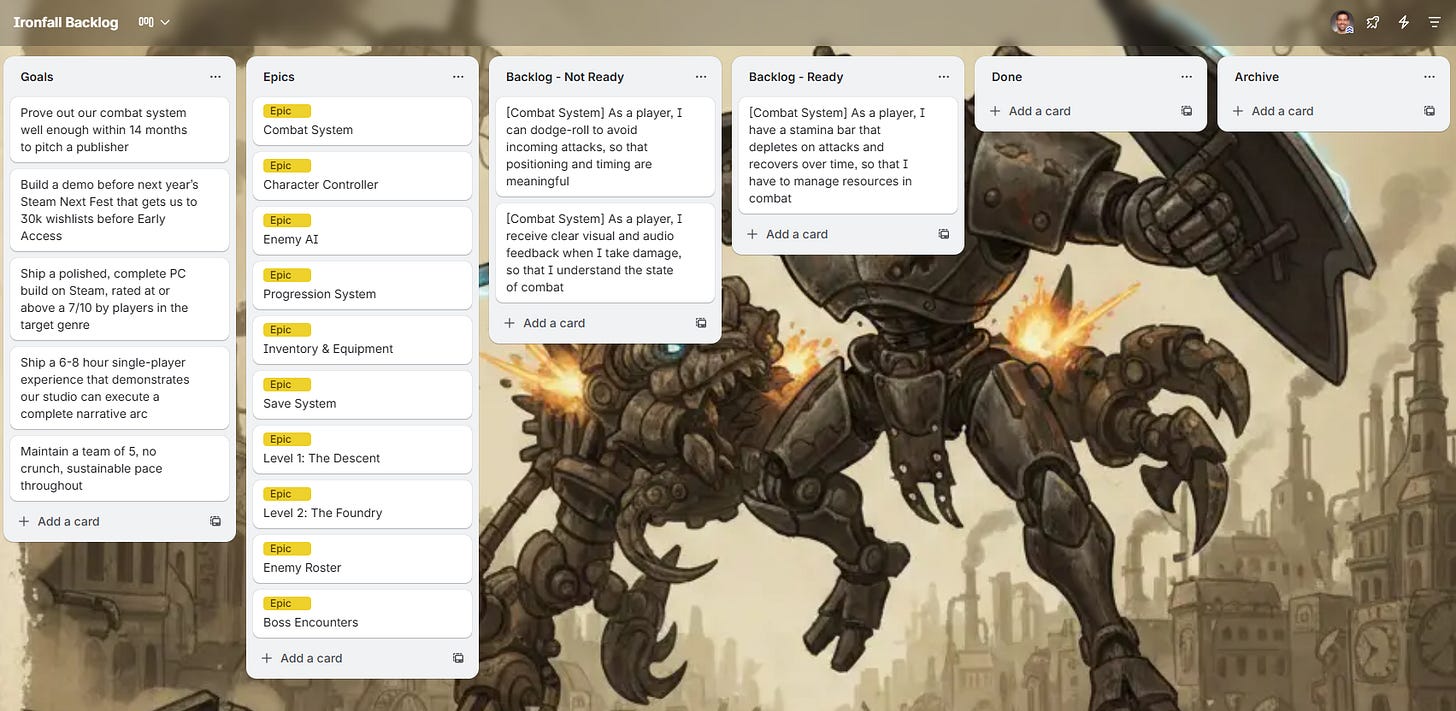

Board 3: Sprint Board (if using Scrum) or Kanban Board (if using Kanban)

This is where active work lives.

For Scrum, the lists are: Sprint Backlog (committed this sprint), In Progress, In Review and Done. A new sprint means either a new board or a reset of this one. Cards in Done at the end of the sprint have met the Definition of Done - not just been declared finished by the developer working on them.

For Kanban, the lists are: Ready (to pull from), In Progress, Blocked (optional), In Review and Done. Work flows continuously. A WIP limit on the In Progress column - something like 6-8 active stories for a five-person team - keeps the board honest and surfaces blockers before they compound.

Card anatomy (every story card should have):

Title: The user story statement

Priority tier: Must, Should, Could

Milestone: MVP 1, MVP 2, etc.

Estimate: in story points

Description: Context, design notes, links to relevant docs

Definition of Done: copy/paste it each time

Acceptance Criteria (as a checklist): specific, evaluable conditions

Tasks (in comments): specific technical sub-tasks (optional, but useful for complex stories)

Discipline labels: Engineering, Art, Design, Audio, Narrative, etc.

Members: @ whoever is working on this

Due date: Target completion, if applicable

Attachments: Links to design docs, reference images, spec sheets
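If it helps to see the card as a data structure, here is a rough sketch in Python. The class name, field names and the `missing_for_ready` helper are all my own assumptions for illustration; nothing here talks to Trello. The helper checks a card against the Ready criteria from the Backlog board: estimated, acceptance criteria defined, no blocking dependencies.

```python
from dataclasses import dataclass, field
from typing import List, Optional

PRIORITIES = ("Must", "Should", "Could")

@dataclass
class StoryCard:
    title: str                      # the user story statement
    priority: str                   # one of PRIORITIES
    milestone: str                  # e.g. "MVP 1"
    estimate: Optional[int] = None  # story points
    description: str = ""
    definition_of_done: str = ""
    acceptance_criteria: List[str] = field(default_factory=list)
    tasks: List[str] = field(default_factory=list)
    disciplines: List[str] = field(default_factory=list)
    members: List[str] = field(default_factory=list)
    due_date: Optional[str] = None
    attachments: List[str] = field(default_factory=list)
    blocked_by: List[str] = field(default_factory=list)  # titles of open blockers

    def missing_for_ready(self) -> List[str]:
        """List what still stops this card from moving to the Ready list."""
        problems = []
        if self.priority not in PRIORITIES:
            problems.append("priority tier")
        if self.estimate is None:
            problems.append("estimate")
        if not self.acceptance_criteria:
            problems.append("acceptance criteria")
        if self.blocked_by:
            problems.append("open blocking dependencies")
        return problems

# Hypothetical story, for illustration only.
card = StoryCard(
    title="As a player, I can dodge-roll to avoid enemy attacks",
    priority="Must",
    milestone="MVP 1",
    disciplines=["Engineering", "Art"],
)
print(card.missing_for_ready())
```

An empty list means the card meets the Ready bar. Anything else is a to-do list for backlog refinement.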

For dependency tracking in Trello specifically, since Trello doesn’t have native dependency linking, I use a simple convention: on each blocked card, I add a comment that starts with “Blocked by:” and lists the titles and links of the blocking stories. When a blocking story moves to Done, the developer checks their cards to see what they’ve unblocked, and updates accordingly.

It’s not perfect. But it’s visible, shared and costs nothing.
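For the curious, the manual check can be sketched in plain Python. This is a sketch under assumptions: it works on comment text you have already copied or exported, it calls no Trello API, and it assumes blockers are listed by title and separated by commas (so titles containing commas would break it). The card titles are hypothetical.

```python
PREFIX = "Blocked by:"

def parse_blockers(comments):
    """Collect blocker titles from every comment that starts with 'Blocked by:'."""
    blockers = set()
    for comment in comments:
        text = comment.strip()
        if text.startswith(PREFIX):
            for title in text[len(PREFIX):].split(","):
                if title.strip():
                    blockers.add(title.strip())
    return blockers

def newly_unblocked(comments_by_card, done, just_finished):
    """Cards that listed `just_finished` as a blocker and now have none left open."""
    done = set(done) | {just_finished}
    unblocked = []
    for title, comments in comments_by_card.items():
        blockers = parse_blockers(comments)
        if just_finished in blockers and blockers <= done:
            unblocked.append(title)
    return sorted(unblocked)

# Hypothetical cards and their comments.
comments_by_card = {
    "Enemy AI behaviors": ["Blocked by: Enemy health and damage system"],
    "Enemy encounters in levels": ["Blocked by: Enemy AI behaviors", "Greybox first?"],
    "Combat feedback audio": [
        "Blocked by: Placeholder combat audio, Enemy health and damage system"
    ],
}

print(newly_unblocked(comments_by_card, done=set(),
                      just_finished="Enemy health and damage system"))
```

Note that the audio card stays blocked in this example: one of its two blockers is still open, which is exactly the case that tends to get missed when the check is done by eye.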

Getting started with your first sprint or initial Kanban body of work

You've written the vision. You've built the roadmap. You've defined the milestones. You've created the backlog, written the stories, added acceptance criteria, estimated and prioritized. Dependencies are mapped. Now: how do you actually start?

If you’re using Scrum:

Pull the highest-priority, dependency-clear, fully Ready stories from the backlog that your team can realistically complete in two weeks. Target roughly your expected sprint velocity, but since you don’t have a velocity yet, you’ll have to make an educated guess. For Ironfall’s five-person team, starting conservatively at around 25 points is a reasonable guesstimate for the first sprint.
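As a rough illustration of that pull, here is a small Python sketch. The stories and point values are invented, and the selection rule is my own assumption: walk the Ready list in priority-tier order (Must, then Should, then Could) and take each story that still fits under the 25-point guess, skipping any that don't.

```python
TIER_RANK = {"Must": 0, "Should": 1, "Could": 2}

def plan_sprint(ready, capacity):
    """Greedily fill the sprint by priority tier without exceeding capacity."""
    chosen, remaining = [], capacity
    # sorted() is stable, so backlog order is preserved within each tier.
    for title, tier, points in sorted(ready, key=lambda story: TIER_RANK[story[1]]):
        if points <= remaining:
            chosen.append(title)
            remaining -= points
    return chosen, capacity - remaining

# Hypothetical Ready stories: (title, priority tier, story points).
ready = [
    ("Enemy health and damage system", "Must", 8),
    ("Player light attack", "Must", 5),
    ("Basic enemy chase behavior", "Must", 8),
    ("Hit reaction animations", "Should", 5),
    ("Damage numbers UI", "Could", 3),
    ("Dodge roll", "Should", 3),
]

chosen, committed = plan_sprint(ready, capacity=25)
print(chosen, committed)
```

Skipping a 5-point Should story in favor of a 3-point one that fits is a judgment call. Some teams would rather stop at the first story that doesn't fit and leave the slack as buffer, which is a perfectly reasonable choice for a first sprint.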

Hold a sprint planning session. Walk through each story. Confirm understanding of acceptance criteria. Break down tasks for each story. Assign initial ownership. Confirm that everyone knows what they’re doing on day one.

At the end of the sprint, hold a sprint review: demo what was completed, evaluate it against the acceptance criteria, and accept or reject each story. Then hold a retrospective: what worked, what didn’t, what do we change? These are not optional ceremonies. The retrospective especially is one of the highest-leverage improvement mechanisms available to small teams. I will forever agree with Clinton Keith’s framing that the willingness to surface and act on honest team feedback is what separates studios that get better from studios that just keep going.

If you’re using Kanban:

Select a body of work from the top of the Ready backlog. How much? Enough to fill the team’s current capacity without exceeding the WIP limit. For Ironfall with five people, a WIP limit of around 6-8 active stories across the team is a reasonable starting point: enough that no one is idle, not so many that everything is in progress simultaneously and nothing is completing.

Work flows through the Kanban board continuously. When a card moves to Done and is accepted, a new card can be pulled from Ready. The team meets regularly - at least twice a week for a small indie team - to review the board, surface blockers and discuss upcoming work.
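The pull rule is simple enough to sketch in a few lines of Python. This is an illustration, not a Trello integration: the board is a plain dictionary, the card names are placeholders, and I'm assuming the WIP limit counts only the In Progress list.

```python
def pull_until_limit(board, wip_limit):
    """Move cards from the top of Ready into In Progress until the WIP limit is hit."""
    pulled = []
    while board["Ready"] and len(board["In Progress"]) < wip_limit:
        card = board["Ready"].pop(0)  # top of Ready = highest priority
        board["In Progress"].append(card)
        pulled.append(card)
    return pulled

# Hypothetical board state for a five-person team with a WIP limit of 6.
board = {
    "Ready": ["Story F", "Story G", "Story H"],
    "In Progress": ["Story A", "Story B", "Story C", "Story D", "Story E"],
    "In Review": [],
    "Done": [],
}

print(pull_until_limit(board, wip_limit=6))
print(board["Ready"])
```

If your team treats Blocked or In Review cards as still consuming capacity, count those lists against the limit too. Either convention works as long as everyone uses the same one.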

Kanban’s strength is its flexibility for work that doesn’t fit neatly into two-week boxes. If your development model is more continuous than sprint-shaped, or if you’re in a phase like closing or live operations where the work is highly variable, Kanban is often the better fit. In the production methodologies article, I talk in more depth about when to choose Scrum, Kanban or a hybrid. That’s the place to go for a more complete discussion of the tradeoffs.

What the first week should not look like:

The first week should not look like continuing to plan. The first week should look like building. If you've done the work above - vision, roadmap, milestones, backlog, prioritization, estimation, dependency mapping - you have enough to start.

The plan will evolve. It always does. That’s fine. The Agile Manifesto’s point about responding to change over following a plan doesn’t mean the plan was wasted. It means the plan did its job by getting you oriented and moving, and now it serves as the thing you update when reality arrives.

A note on what you haven’t planned yet

If you’ve worked through everything above, you have a solid project plan foundation. But I want to touch briefly on what isn’t in here, because the full picture of a production-ready project plan is larger than what we covered. And we covered a lot.

Staffing planning: how you’ll build and manage the team over time is its own topic, with its own timing considerations and tradeoffs. You can learn more about it here and here.

Risk planning: how you identify, document and respond to the things that could go wrong is something I’d argue is as important as any of the above. I wrote about this in the PMP best practices piece and covered risk registers in more depth separately (below). The short version: if you don’t have a risk register by the time you start your first sprint, you should.

Quality planning: your testing approach, standards, processes and tooling need to be decided before you’re in production dealing with a bug backlog that nobody planned for. I also wrote an article about this. Feel free to check it out.

Communication planning, financial planning, team chartering and role definitions all belong in the full picture too. I still haven’t got to these.

None of these is optional. But none of them should block you from getting started on what we covered here. Do them in parallel, not instead.

What planning actually does

I want to close on something that’s easy to miss if you approach planning as a compliance exercise.

Planning is not primarily about producing documents. It is about generating shared understanding. When you and your team go through the work of writing stories, estimating them together, mapping dependencies, choosing what goes first and why - you’re not just creating a backlog. You’re having, early and on purpose, the conversations that would otherwise have happened on a bad timeline: in the middle of a sprint, under pressure, when someone discovers that two people had completely different mental models of how a system was supposed to work.

The plan surfaces misalignment early, when it’s cheap. It surfaces ambiguity early, when it’s resolvable. It creates a shared language for talking about the work and a shared reference for making decisions.

The Ironfall team, at the end of a well-run planning process, doesn't just have a Trello board. They have a shared picture of what they're building, in what order, to what standard and why. When something goes wrong - and something will go wrong - they have a foundation from which to respond rather than just react.

That’s what a good plan does. It doesn’t prevent uncertainty. It gives you a coherent place to stand when uncertainty arrives.
